If you are new to DevOps and infrastructure automation, this article explains the immutable infrastructure model in detail.

Before getting into the technical explanation, you should have a clear understanding of the literal meaning of the words mutable and immutable.

- Mutable: Something that can be changed. You can keep making changes to it after it is created.

- Immutable: Something that cannot be changed. Once it is created, you cannot change anything about it.

Now let's look at a real-world example: a house. In a house, there are objects you can change (mutable) and objects that must be replaced (immutable) if something happens to them. For example, you can paint a door a different color or change its handles to give it a different look, so a door is a mutable object. A washbasin, on the other hand, is an immutable object. If you want to change the color of the washbasin, you need to replace it with a new one. The same applies to a floor tile.

In the IT world, the concepts of mutability and immutability appear in both software engineering and DevOps. In software engineering, they are applied in object-oriented programming, and in DevOps, they are applied to infrastructure automation. In this guide, we focus on immutable infrastructure from a DevOps standpoint.

What is Immutable Infrastructure?

To understand immutable infrastructure, first, you should know the lifecycle of a server.

Here is the high-level lifecycle of a server running an application. (This is just for reference; the process varies from org to org.)

- Deploy a server.

- SSH into the server.

- Install required utilities.

- Configure security agents, firewalls, and utilities for security. (Security hardening)

- Install and configure the required application.

- Make application and server configuration changes to improve application performance.

- Make the server live for production workload.

- Every month, log in to the server and apply security patches (server updates).

- Perform an application upgrade when it is available.

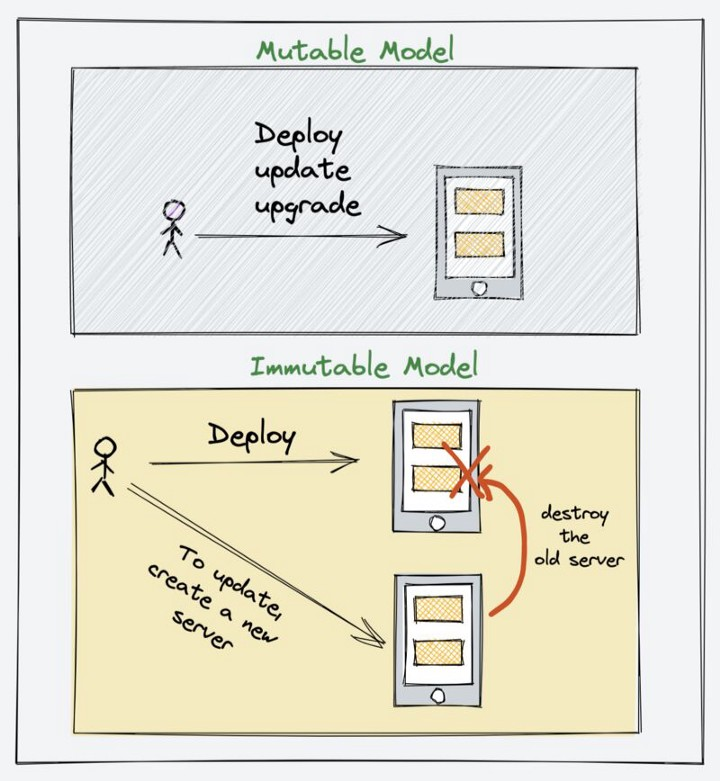

As you can see, the steps above follow a mutable model, because we keep making changes to a running server as requirements change. So when you manage servers in place using configuration management tools like Ansible, Puppet, or Chef, you follow a mutable model.

Immutable infrastructure, true to its literal meaning, is a concept where you don’t make any changes to a server after you deploy it. The server is deployed from an image that already contains the required configurations, utilities, and applications, so the application starts running the moment the server comes up.

If you want to make any change, the existing servers are destroyed and replaced with new ones. A change could be a patch, an application upgrade, a server configuration change, and so on.
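To make the "replace, never modify" rule concrete, here is a minimal Python sketch. It is only an analogy, not a deployment tool: the `Server` class, the `roll_out` helper, and the image names are hypothetical and exist purely for illustration.

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Server:
    """A deployed server described entirely by the image it was launched from."""
    name: str
    image: str  # hypothetical image identifier


def roll_out(old: Server, new_image: str) -> Server:
    """Return a replacement server built from new_image; the old one is never edited."""
    return replace(old, name=f"{old.name}-next", image=new_image)


v1 = Server(name="web", image="app-image-v1")

try:
    v1.image = "app-image-v2"  # in-place change: rejected by the frozen dataclass
    mutated = True
except FrozenInstanceError:
    mutated = False

v2 = roll_out(v1, "app-image-v2")  # the immutable way: build a replacement
```

The frozen dataclass plays the role of a deployed server: any attempt to change it fails, so the only way to get a different image is to create a new object and retire the old one.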

You can follow the immutable infrastructure model for most modern applications, including database clusters.

For example, if you have applications running in autoscaling groups, you follow an immutable server deployment model. Whenever you want to deploy new code, you destroy the existing VMs so that the new ones launched by autoscaling download the latest code. Another method is to update the launch template to point to the latest image with the code baked in, and then replace the running instances.
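The replacement flow can be sketched as a small in-memory simulation. This is not a cloud SDK call: the instance dictionaries, the `rolling_replace` function, and the `batch_size` parameter are assumptions made for illustration, and a real autoscaling group would do the equivalent work through its own launch template and instance replacement features.

```python
from typing import Dict, List


def rolling_replace(
    instances: List[Dict[str, str]], new_image: str, batch_size: int
) -> List[Dict[str, str]]:
    """Replace instances batch by batch; never patch one in place."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    fleet = list(instances)  # copy, so the caller's list is left untouched
    for start in range(0, len(fleet), batch_size):
        for i in range(start, min(start + batch_size, len(fleet))):
            old = fleet[i]
            # Stand-in for "terminate the old VM and launch one from the new image".
            fleet[i] = {"id": f"{old['id']}-r", "image": new_image}
    return fleet


group = [{"id": f"i-{n}", "image": "app-image-v1"} for n in range(4)]
updated = rolling_replace(group, "app-image-v2", batch_size=2)
```

Replacing in batches keeps part of the fleet serving traffic while the rest is swapped out, which is why the batch size matters in practice.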

In an immutable model, standard best practices should be followed in terms of configurations.

For example, externalize frequently changed configurations using a config store or a service discovery tool. A classic example is the Nginx upstream configuration, where the list of backend servers changes often.

This way, you don’t have to bounce (restart or replace) a server for minor configuration changes.
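As a rough sketch of externalized configuration, the snippet below renders an Nginx `upstream` block from a list of backends. The `CONFIG_STORE` dictionary is a hypothetical stand-in for a real config store or service discovery lookup, and the key name and addresses are made up for the example.

```python
from typing import Dict, List

# Hypothetical stand-in for a config store or service-discovery lookup.
CONFIG_STORE: Dict[str, List[str]] = {
    "upstreams/app": ["10.0.1.10:8080", "10.0.1.11:8080"],
}


def render_upstream(name: str, backends: List[str]) -> str:
    """Render an Nginx upstream block from a list of host:port backends."""
    if not backends:
        raise ValueError("an upstream needs at least one backend")
    servers = "\n".join(f"    server {backend};" for backend in backends)
    return f"upstream {name} {{\n{servers}\n}}\n"


config_text = render_upstream("app", CONFIG_STORE["upstreams/app"])
```

Because the backend list lives outside the image, adding or removing a backend only requires re-rendering this block and reloading Nginx, not baking a new image.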

If you are familiar with containers, they are the best-known example of immutable infrastructure. Any change to a container results in an image rebuild and a new container, except for externalized configurations.

Immutable Infrastructure Model For CI/CD

So how does the immutable infrastructure model fit the CI/CD process?

When you follow an immutable infrastructure model in a CI pipeline, the deployable artifact is a virtual machine image or a Docker image.

For example, once CI is done, you can use tools like Docker or Packer to bake the application code into a container image or a VM image (for example, an AWS AMI) and deploy that image to the relevant environments.

When it comes to deployment, you can follow either blue-green or canary deployment. Let's have a look at both approaches.

- Blue-green Model: In this model, you use the latest application image to deploy a new set of servers (blue) alongside the production servers (green), but the new servers do not serve traffic yet. Once the new servers are validated, traffic is redirected to them and the old ones are destroyed.

- Canary Model: In this model, instead of shifting all the traffic to the new set of servers at once, only a subset of traffic is directed to the new servers. The traffic shifts gradually over a timeframe decided by the team. Once the traffic is fully switched to the new set of servers, the old ones are deleted.
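The difference between the two approaches comes down to how traffic weights change over time. The sketch below models that with plain dictionaries and lists; `blue_green_switch`, `canary_schedule`, and the even step sizes are illustrative assumptions, not the behavior of any particular load balancer.

```python
from typing import Dict, List


def blue_green_switch(
    weights: Dict[str, int], new_pool: str, validated: bool
) -> Dict[str, int]:
    """Send all traffic to new_pool, but only once it has been validated."""
    if new_pool not in weights:
        raise KeyError(new_pool)
    if not validated:
        return dict(weights)  # keep serving from the current pool
    return {pool: (100 if pool == new_pool else 0) for pool in weights}


def canary_schedule(steps: int) -> List[int]:
    """Percent of traffic sent to the new servers at each step, ending at 100."""
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [round(100 * i / steps) for i in range(1, steps + 1)]


# As in the list above, "blue" is the new set of servers and "green" is live.
before = {"green": 100, "blue": 0}
after = blue_green_switch(before, "blue", validated=True)
schedule = canary_schedule(4)
```

Blue-green is a single all-or-nothing cutover gated on validation, while canary moves through intermediate percentages before reaching 100. In both cases the old servers are removed only after the new ones carry all the traffic.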

Also, I have created a video explaining immutable infrastructure.

Image Lifecycle Management & Patching

In the immutable infrastructure model, VM or container image creation and patching play a key role. You need to have a well-defined image lifecycle management process integrated with a CI/CD tool.

When you start working with cloud and container environments, you pick a base image and start experimenting with it manually or through automation.

In actual project environments, it is not that straightforward.

I want to shed some light on how it happens in an actual project environment and how application images are built as per organizational security policies.

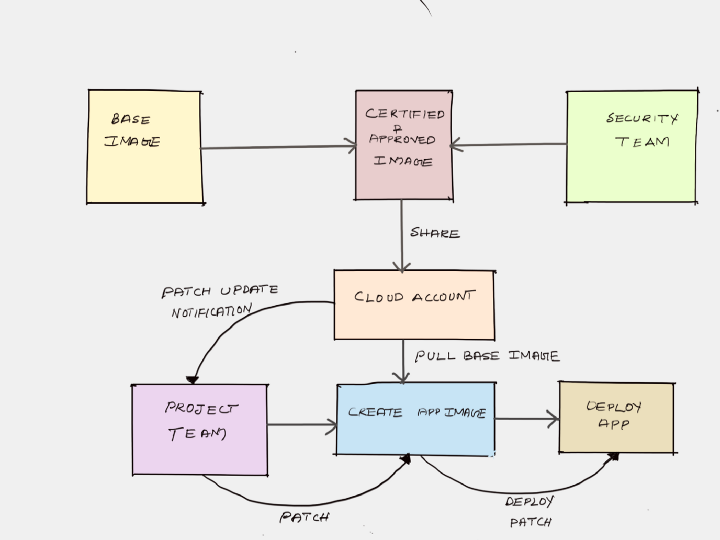

So, here is a list of generic image lifecycle management steps (for VMs and containers) followed in secured project environments.

- In a secured, compliant environment, you are typically not allowed to directly use the base images provided by the cloud provider or the Docker base images available in public container registries like Docker Hub.

- Every organization creates VM/container base images with standard security tools (agents), DNS/proxy settings, LDAP configurations, etc. (the details vary with each organization’s security policy). Normally these images are created and maintained by a central platform team or security team. They are often called golden images.

- The approved and certified base images are shared with all the teams in the organization. They could live in a single cloud account or be shared with multiple child accounts within the organization.

- Then each team can create its own images with applications on top of the approved base images. (Tools like Docker & Packer are used here)

- The new image created by the teams will be tested and deployed in production.

- When the base image gets new updates or patches, the platform or enterprise security team releases a new version of the base image and notifies all project teams.

- Every organization has a patching lifecycle, meaning there are guidelines set by security teams on how often updates and patches must be applied to VMs. For example, it could be once a month or once every three months.

- For virtual machines, patching could be “in-place,” meaning patching the existing instance, or it could be immutable, meaning replacing the existing instance with one built from a new image. Containers are immutable by nature.

- Based on the patching lifecycle, every team rebuilds its existing application images on the new base image and deploys them to production, irrespective of whether the application code has changed. This applies to both virtual machines and containers.
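The rebuild decision described in the steps above can be sketched as a simple check. This is a hedged illustration only: the `AppImage` fields, the integer base image versions, the 30-day patch window, and the image names are all assumptions, and a real pipeline would read this data from an image registry or inventory.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class AppImage:
    name: str
    base_version: int  # version of the golden base image it was built on
    built_on: date


def needs_rebuild(
    image: AppImage, latest_base: int, today: date, patch_window_days: int
) -> bool:
    """True when the image lags the latest base image or is older than the patch window."""
    outdated_base = image.base_version < latest_base
    past_window = today - image.built_on > timedelta(days=patch_window_days)
    return outdated_base or past_window


images: List[AppImage] = [
    AppImage("orders", base_version=3, built_on=date(2024, 1, 10)),
    AppImage("billing", base_version=4, built_on=date(2024, 2, 20)),
]
to_rebuild = [
    image.name
    for image in images
    if needs_rebuild(image, latest_base=4, today=date(2024, 3, 1), patch_window_days=30)
]
```

Note that the check never looks at application code. An image is rebuilt because its base is outdated or its patch window has expired, which matches the last step in the list.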

Conclusion

We have looked at the key concepts of immutable infrastructure. It is one of the key elements when it comes to infrastructure as code.

As a DevOps engineer, you should follow all the standard best practices while building and deploying immutable images to reduce the attack surface.

If you are using containers or container orchestration tools like Kubernetes, you are already following an immutable model for application deployments.