In our last post, we wrote about setting up Docker containers as build slaves. Integrating Docker into your build pipeline has many advantages, and an ECS cluster based build slave setup adds a few more:

1. In teams where continuous development happens, the slave machines sit idle most of the time. With ECS you can cut costs by running fewer dedicated Jenkins slave machines; cluster capacity increases only when more resources are needed.

2. Build environments are managed in containers with tagged versions, rather than by installing several versions of an application on the same machine.

3. Optimal usage of system resources by spinning up containers only when needed.

Configuring ECS Cluster As Build Slave

In this post, we will guide you through setting up Jenkins slaves on an ECS cluster. The setup consists of the following.

1. A working Jenkins master (2.42-latest) running in a container, set up behind an ELB.

Note: You can follow this article for setting up the latest Jenkins server using a container.

2. An ECS cluster with 2 Container instances. You can decide on the container instance capacity based on your project needs.

Note: It is better to configure an Auto Scaling group (ASG) for the instances so that the ECS cluster can scale on demand.

Prerequisites

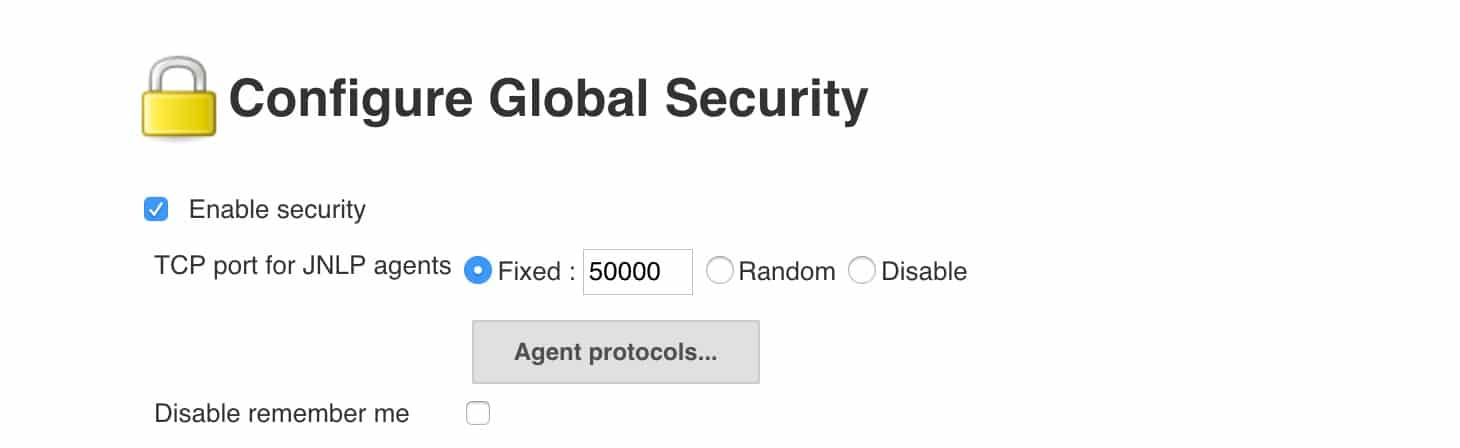

1. In ec2 and ELB security groups, you should open the jnlp (50000) port for container slaves to connect to jenkins master.

2. Go to Manage Jenkns --> Configure Global Security, check,Enable Security Option check fixed and enter 50000 as shown in the image below.
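
Before moving on, it can help to confirm that the JNLP port is actually reachable from a machine in the same network as the ECS container instances. The following is a minimal Python sketch of such a check, not part of the original setup. The function name `jnlp_port_open` and the hostname in the usage comment are placeholders chosen for illustration.

```python
import socket


def jnlp_port_open(host: str, port: int = 50000, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and opens a TCP socket.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS failures.
        return False


# Example usage (replace the placeholder with your own ELB hostname):
#   jnlp_port_open("jenkins-elb.example.com")
```

A `False` result usually points at a missing security group rule or a missing ELB listener for port 50000, but it only tests TCP reachability, not whether Jenkins itself is answering on that port.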

Follow the steps given below for configuring jenkins with your existing ECS cluster.

Install Amazon EC2 Container Service Cloud Plugin

1. Go to Manage Jenkins --> Manage Plugins and search for Amazon EC2 Container Service Plugin

2. Install the plugin and restart Jenkins.

Configure ECS settings on Jenkins

To integrate ECS with the Jenkins master, Jenkins needs access to the AWS APIs through the AWS SDK. You can enable this in two ways.

1. You can attach an IAM role with EC2 Container Service full access to the instance where the Jenkins server is installed.

2. You can add an AWS access key and secret key to the Jenkins credentials and use them in the ECS configuration. If you are running Jenkins in a container outside ECS, this is the only available option. If you are running your Jenkins server in ECS, you can instead assign it a task role with privileges on the ECS cluster.

In this tutorial, I am using the access key and secret key stored in Jenkins AWS credentials.
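
If full ECS access is broader than you would like for the access key or role, a narrower IAM policy may be enough. The sketch below builds such a policy document in Python. The list of actions is an assumption based on what a plugin that registers task definitions and starts and stops tasks would plausibly need; check it against the plugin's documentation before relying on it. The function name `build_ecs_policy` is made up for this example.

```python
import json

# Assumed set of ECS actions for a Jenkins cloud plugin. These are real IAM
# action names, but whether the list is complete for your plugin version is
# not confirmed here.
ASSUMED_ECS_ACTIONS = [
    "ecs:ListClusters",
    "ecs:ListContainerInstances",
    "ecs:DescribeContainerInstances",
    "ecs:RegisterTaskDefinition",
    "ecs:DeregisterTaskDefinition",
    "ecs:ListTaskDefinitions",
    "ecs:DescribeTaskDefinition",
    "ecs:RunTask",
    "ecs:StopTask",
    "ecs:DescribeTasks",
]


def build_ecs_policy(actions=ASSUMED_ECS_ACTIONS, resource="*"):
    """Return an IAM policy document (as a dict) allowing the given actions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": list(actions), "Resource": resource}
        ],
    }


# Print the JSON so it can be pasted into the IAM console.
print(json.dumps(build_ecs_policy(), indent=2))
```

The printed JSON can be attached to the IAM user that owns the access key, or to the instance or task role if you chose one of the role based options.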

Follow the steps given below for configuring the ECS plugin to integrate the ECS cluster.

1. Go to Manage Jenkins --> Configure System and look for the Cloud option.

2. Click Add a new cloud dropdown and select Amazon EC2 Container Service Cloud

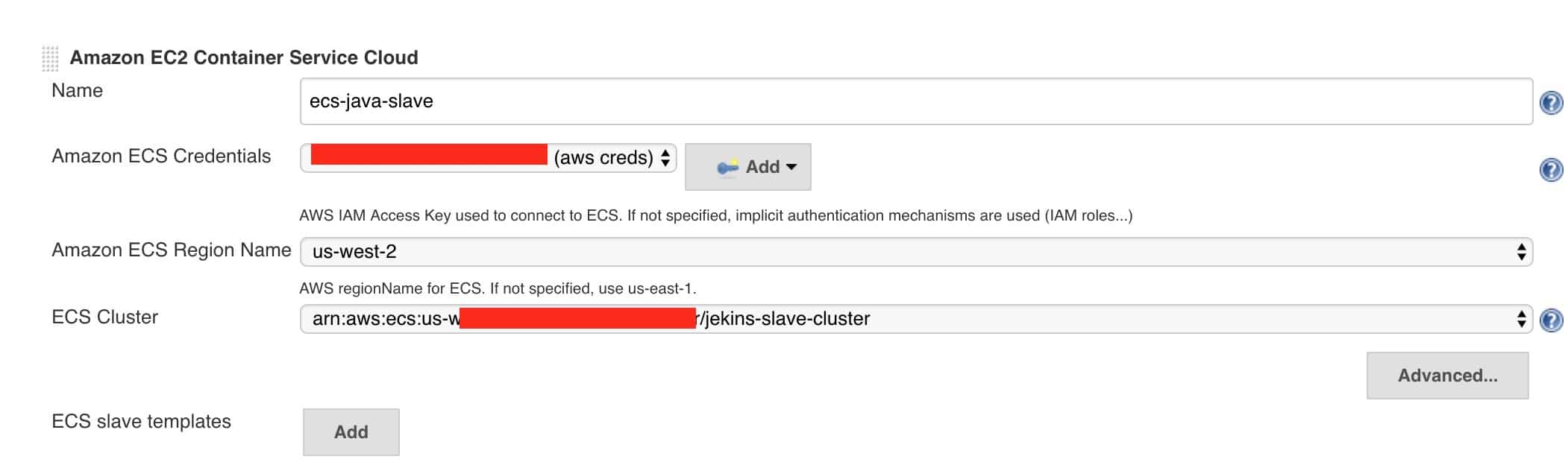

3. Fill in the following details.

Name: User defined name.

Amazon ECS Credentials: If you are using AWS access keys, select the relevant credential. If you are using an IAM role, leave it empty. The cluster will be listed automatically.

Amazon ECS Region Name: AWS region where you have the cluster.

ECS Cluster: Select your ECS cluster from the drop-down.

An example is shown below.

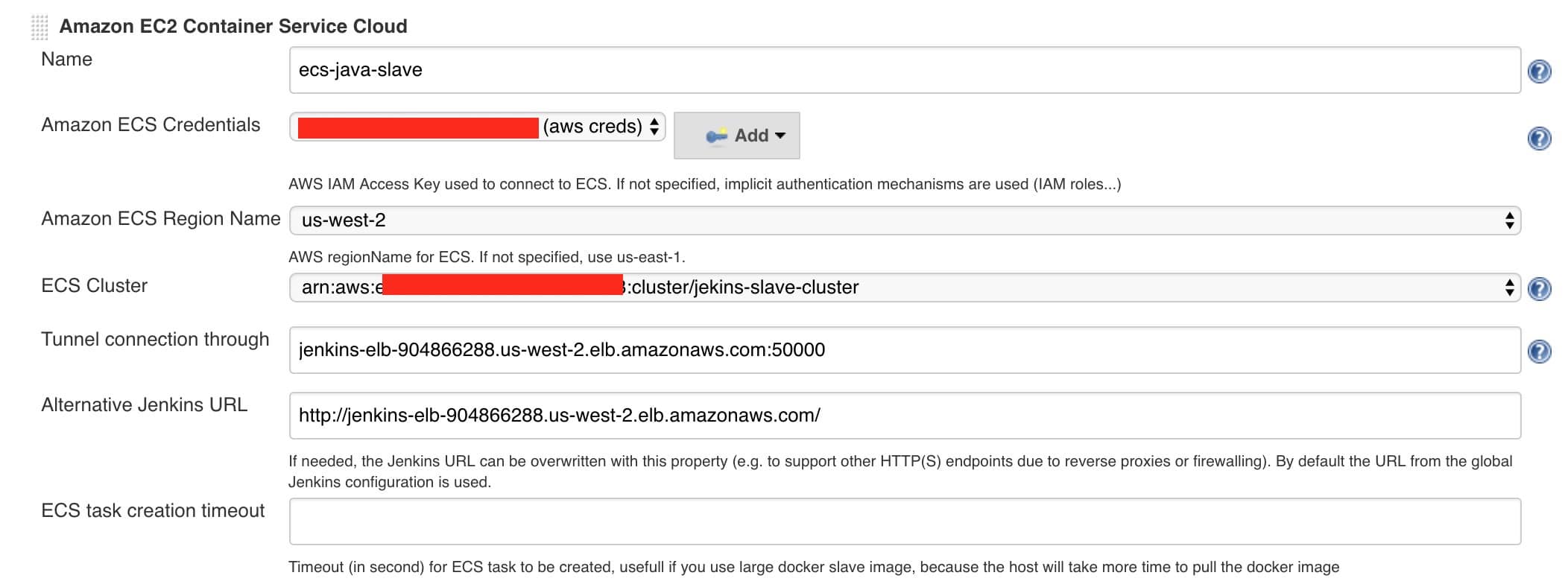

If you are running the Jenkins master behind an ELB, you need to add the tunnel configuration in the Advanced section.

The Tunnel connection through option should contain the ELB URL followed by the JNLP port, as shown below.

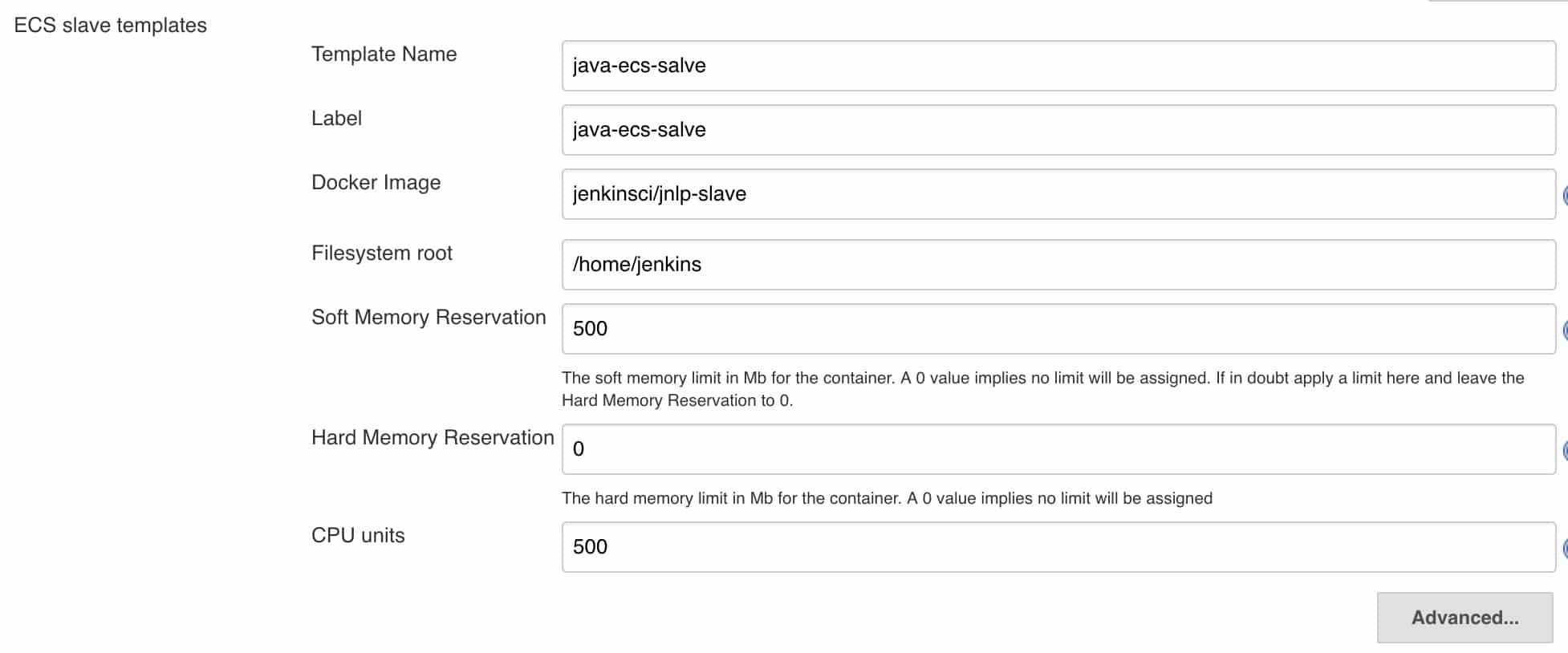
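
To make the expected value concrete, here is a small Python sketch that derives it from an ELB address. It assumes the field takes the form `host:port` without a scheme or path, which is how the Jenkins agent's tunnel option is normally written. The helper name `tunnel_value` and the example hostname are hypothetical.

```python
from urllib.parse import urlsplit


def tunnel_value(elb_address: str, jnlp_port: int = 50000) -> str:
    """Build a host:port string from an ELB hostname or URL."""
    # urlsplit only fills in the hostname when a "//" prefix is present,
    # so add one for bare hostnames such as "my-elb.example.com".
    target = elb_address if "://" in elb_address else f"//{elb_address}"
    host = urlsplit(target).hostname
    if not host:
        raise ValueError(f"could not find a hostname in {elb_address!r}")
    return f"{host}:{jnlp_port}"


# Both forms give "my-elb.example.com:50000".
print(tunnel_value("http://my-elb.example.com/"))
print(tunnel_value("my-elb.example.com"))
```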

4. Next, you need to add a slave template with a docker image which acts as a slave node.

To do this, under ECS slave templates in the cloud configuration, click Add and enter the details. In the following example, I have added a Java JNLP slave image with the label java-ecs-slave. The label is important because we will use it in the job to restrict the job to run on containers.

After filling out the details, save the configuration.
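
For orientation, a slave template corresponds roughly to a container definition inside an ECS task definition. The Python sketch below builds one such definition as a plain dictionary. It is an illustration under assumptions, not the plugin's actual output: the container name and the memory and CPU values are placeholders, and the task definition the plugin really registers may contain more fields.

```python
import json


def slave_container_definition(image="jenkinsci/jnlp-slave",
                               memory_mib=512, cpu_units=256):
    """Build one ECS container definition for a Jenkins JNLP slave."""
    if memory_mib <= 0 or cpu_units < 0:
        raise ValueError("memory must be positive and cpu must not be negative")
    return {
        "name": "jenkins-slave",  # hypothetical container name
        "image": image,           # Docker image that acts as the slave node
        "memory": memory_mib,     # hard memory limit in MiB (placeholder value)
        "cpu": cpu_units,         # CPU units, 1024 per vCPU (placeholder value)
        "essential": True,        # the task stops when this container exits
    }


print(json.dumps(slave_container_definition(), indent=2))
```

Sizing matters here: if the memory value is larger than what any container instance has free, the task cannot be placed and the build will wait in the queue.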

Test The Configuration

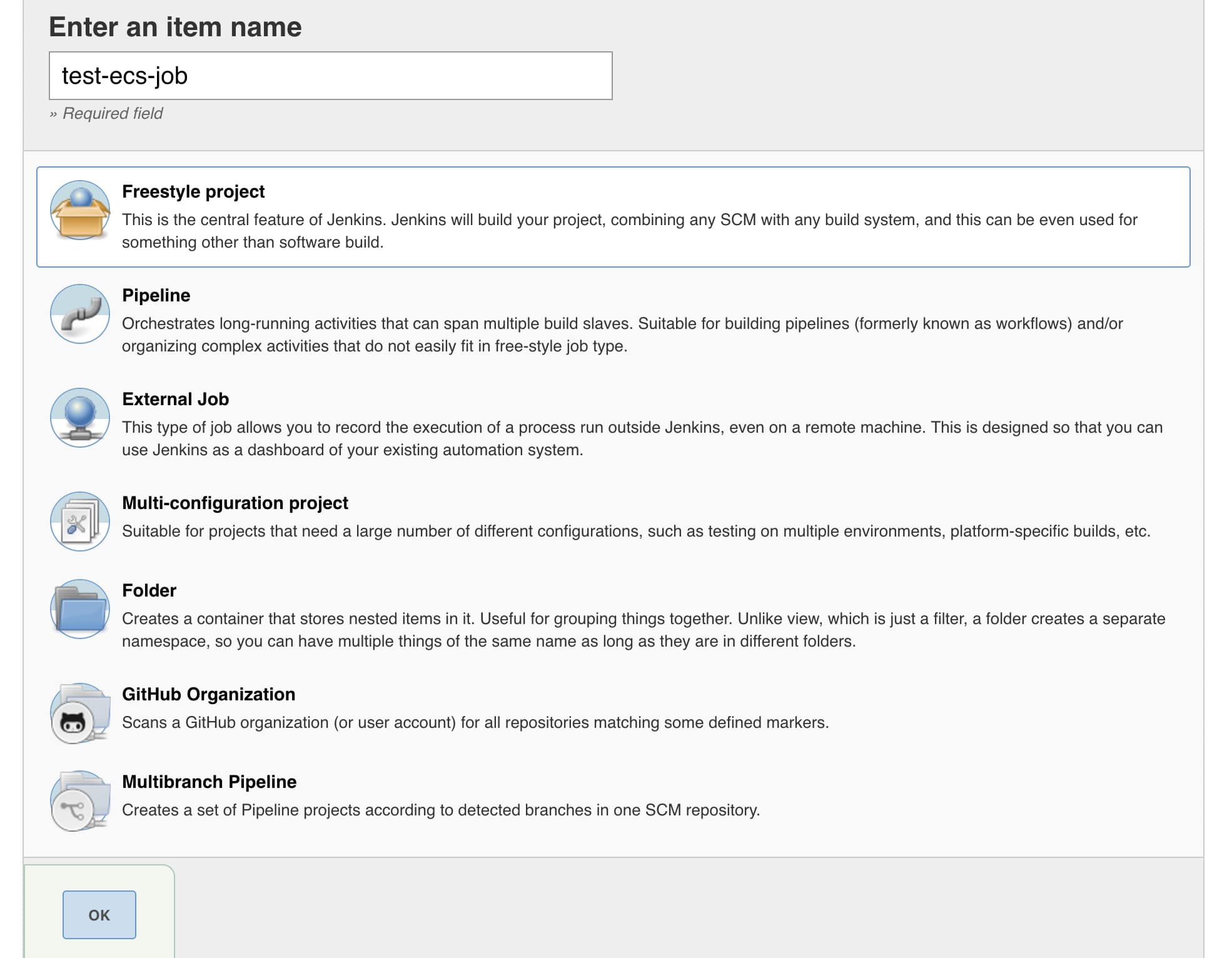

To test the configuration, we will create a Freestyle project and try to run it on ECS.

1. Go to New item and create a freestyle project.

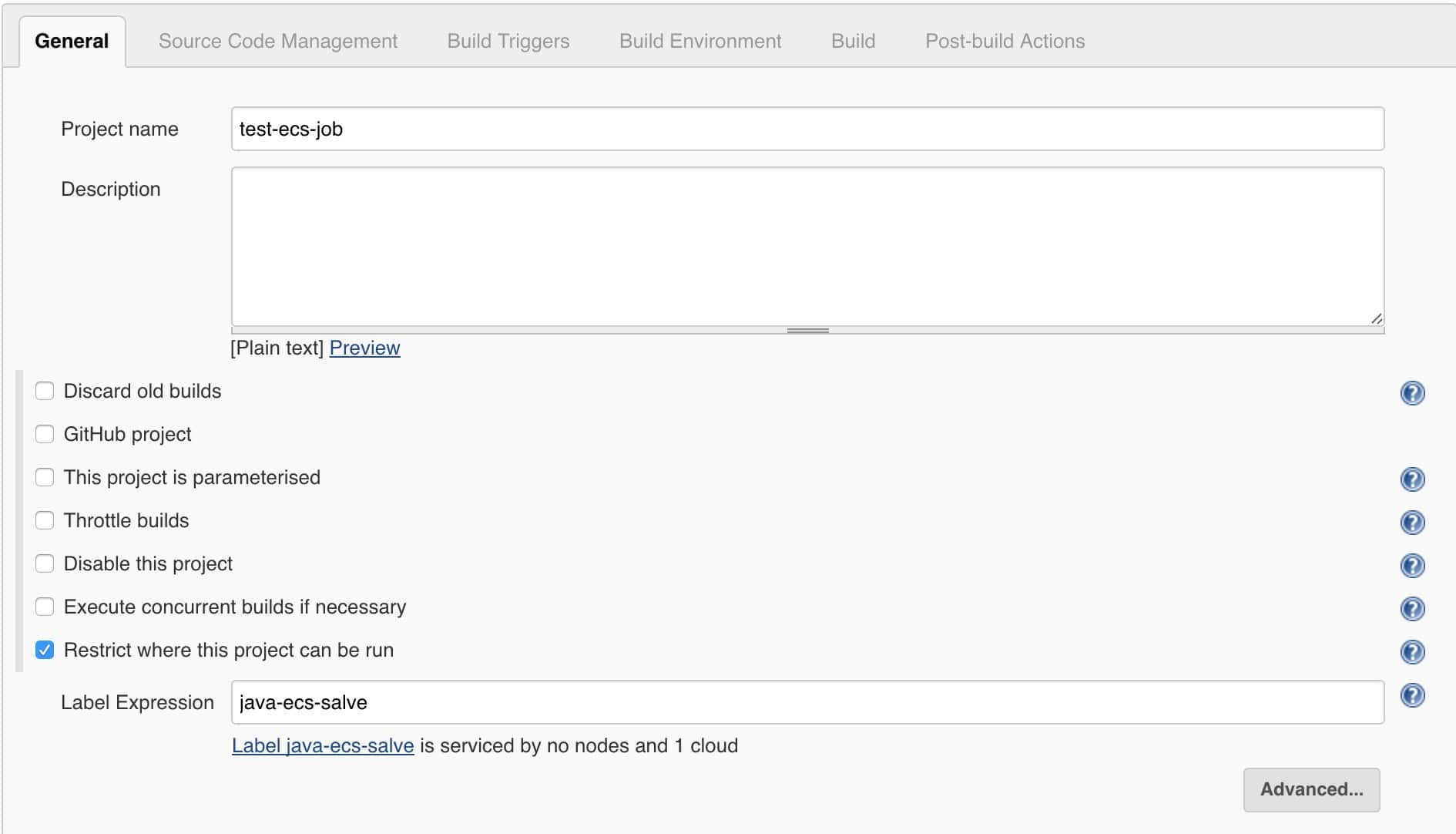

2. Under Restrict where this project can be run, type the label name you gave in the slave template, as shown in the image below.

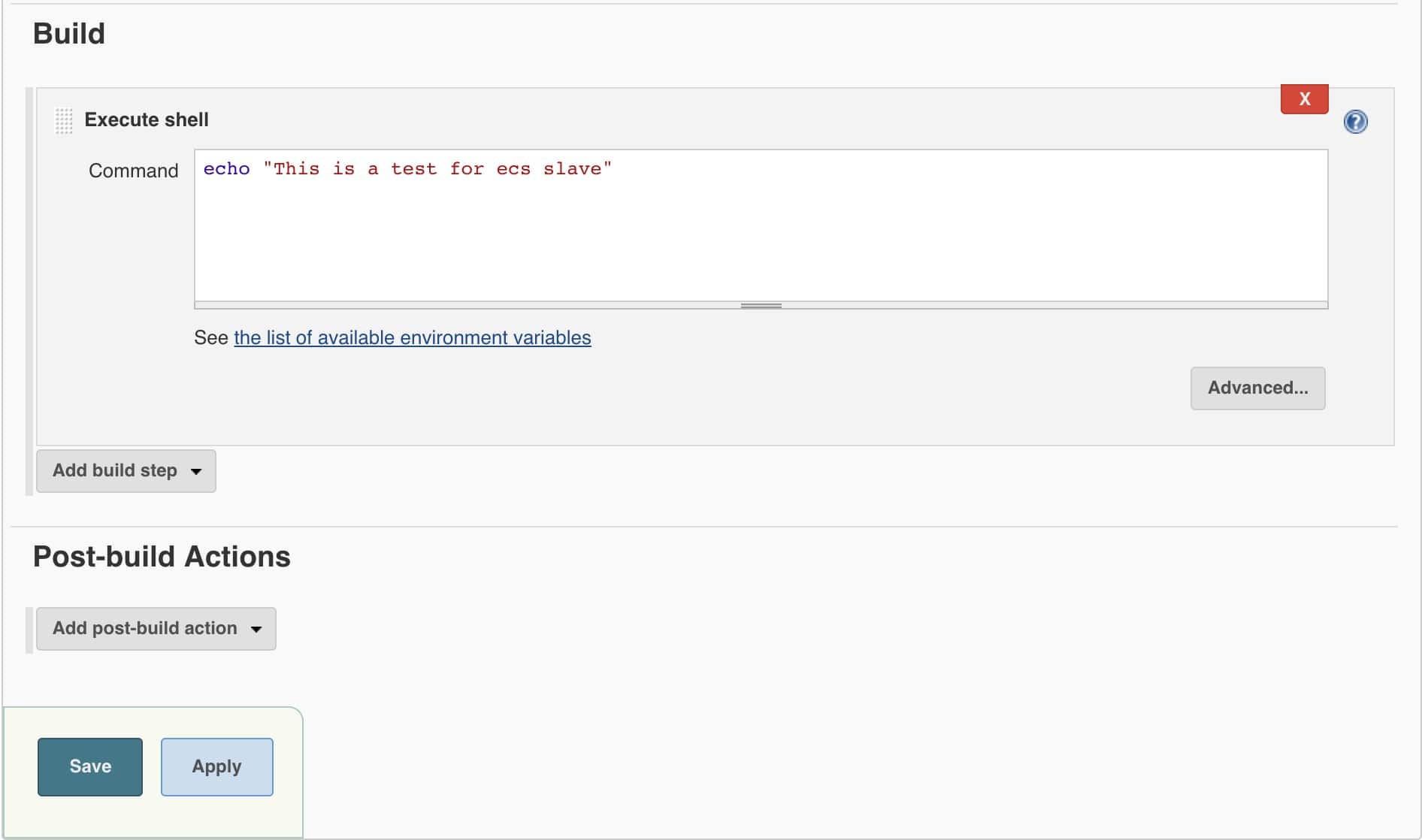

3. Under Build, select the Execute shell option and type an echo statement as shown below.

echo "This is a test for ecs slave"

4. Save the job and click Build Now.

The build stays pending until the container is deployed and then starts. Once the shell step has run, the container is destroyed in ECS.

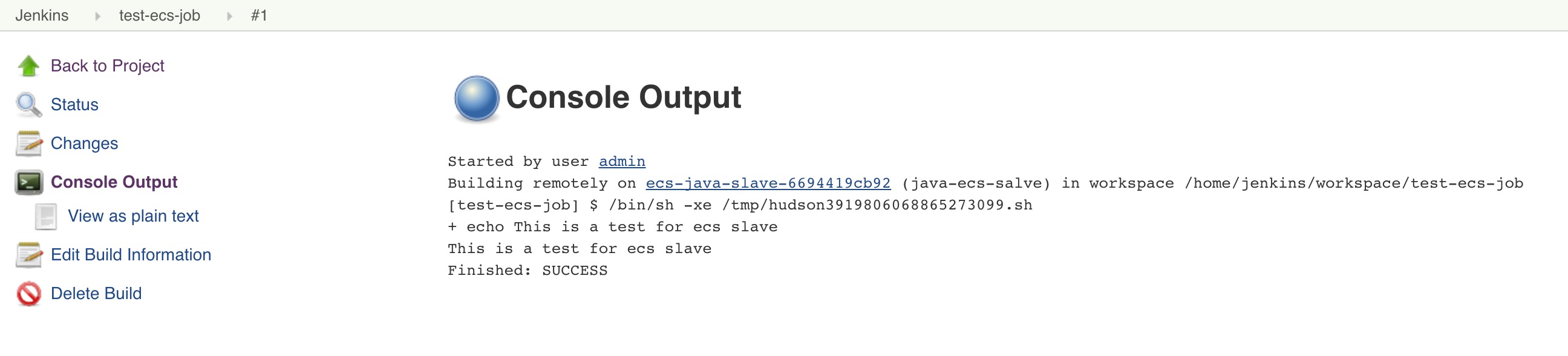

If you click the console output, you should see the message from the echo statement.

Once you have tested your configuration, you can create actual jobs by integrating with Git and adding the build steps you want. You need to configure the Docker image based on your requirements.

For Java based projects, you can use the following CloudBees image in your slave templates. It contains most of the Java build dependencies.

1. https://hub.docker.com/r/cloudbees/jnlp-slave-with-java-build-tools/~/dockerfile/

For projects other than Java, you can use the following JNLP image, which we used in the demo, as the base image.

1. https://hub.docker.com/r/jenkinsci/jnlp-slave/

Using an ECS cluster as a build slave can save time and cost. If you run your applications on AWS, it is worth a try. You can start with a few applications and, once you gain confidence, move the entire build fleet to ECS container based build pipelines.

Contact us at contact@devopscube.com or leave a comment for any help with this implementation.